(Video) lecture 21: ML engineering “from scratch”#

Our data: Austin Animal Center is the largest no-kill animal shelter in the US.

Question and prediction task: The shelter wants to know, at the moment an animal walks in, which animals are at risk of a bad outcome (e.g. euthanasia, died, missing) versus a good outcome (e.g. adoption, transfer, return to owner). The goal is to help staff prioritize interventions: foster placement, medical care, or adoption listings.

We will see two new pandas concepts:

pd.merge for combining two tables on a shared key

Missingness with .isna() / .fillna(), plus one-hot indicators for missing categoricals

0. Imports#

We begin with our usual imports:
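The import cell is not reproduced in these notes. A minimal set that covers the library calls named later in the lecture would look roughly like this; the exact list used in the original notebook is an assumption.

```python
# Assumed imports: one line per tool that the lecture mentions by name.
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score, roc_curve
from sklearn.model_selection import train_test_split
```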

1. Data#

We have two CSV files:

intakes.csv, one row per animal, with information recorded at intake time (species, sex, intake type, condition, age, breed, color)

outcomes.csv, one row per animal, with the outcome of their shelter stay (adopted, transferred, euthanized, etc.)

We’ll need to combine them to build our dataset.

New concept: pd.merge#

Pandas has a merge() method that works like a SQL join. Given two DataFrames and a shared key column, it lines up rows that have matching keys.

    # Toy example: left table has features, right table has labels.
    left_df = pd.DataFrame({
        "id": ["A", "B", "C", "D"],
        "color": ["red", "blue", "green", "red"],
    })
    right_df = pd.DataFrame({
        "id": ["A", "B", "C"],
        "label": [1, 0, 1],
    })

    # Inner join on "id": only keys present in both tables survive.
    left_df.merge(right_df, on="id", how="inner")

The how="inner" argument keeps only rows where the key appears in both tables, so row "D", which has no match in right_df, disappears from the output.
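To see what each join type keeps, the same toy frames can be merged once per value of how (inner, left, right, outer). This is a small sketch: the extra "E" row in the right table is a hypothetical addition, so that both tables have one unmatched key.

```python
import pandas as pd

left_df = pd.DataFrame({
    "id": ["A", "B", "C", "D"],
    "color": ["red", "blue", "green", "red"],
})
# Hypothetical variation on the toy example: "E" exists only on the right.
right_df = pd.DataFrame({
    "id": ["A", "B", "C", "E"],
    "label": [1, 0, 1, 0],
})

row_counts = {}
for how in ["inner", "left", "right", "outer"]:
    merged = left_df.merge(right_df, on="id", how=how)
    row_counts[how] = len(merged)
    print(how, "->", sorted(merged["id"]))

# After the loop, `merged` holds the outer join: unmatched keys get NaN
# in the columns that come from the other table.
print(merged)
```

Comparing the row count before and after a merge is a cheap way to notice when a join silently dropped or kept more rows than expected.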

The full merge options are:

"inner": keep only rows where the key appears in both tables

"left": keep all rows from the left table, and only matching rows from the right table

"right": keep all rows from the right table, and only matching rows from the left table

"outer": keep all rows from both tables, and fill missing values with NaN

For our shelter data we want inner: we only care about animals where we know both the intake info and the final outcome. Let’s merge on Animal ID:
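A sketch of that merge, using two tiny stand-in tables instead of the real CSVs (which would be loaded with pd.read_csv). The "Outcome Type" column name and all row values are assumptions for illustration; only "Animal ID" and "Animal Type" appear in these notes.

```python
import pandas as pd

# Hypothetical stand-ins for intakes.csv and outcomes.csv.
intakes = pd.DataFrame({
    "Animal ID": ["A001", "A002", "A003"],
    "Animal Type": ["Dog", "Cat", "Dog"],
})
outcomes = pd.DataFrame({
    "Animal ID": ["A001", "A003", "A004"],
    "Outcome Type": ["Adoption", "Euthanasia", "Transfer"],  # assumed column name
})

# validate="one_to_one" raises a MergeError if either table repeats an Animal ID,
# which guards the "one row per animal" expectation.
df = intakes.merge(outcomes, on="Animal ID", how="inner", validate="one_to_one")
print(df)
print(len(intakes), "intakes,", len(outcomes), "outcomes ->", len(df), "merged rows")
```

Here A002 (no outcome) and A004 (no intake record) both drop out, which is the behavior we want for a supervised dataset.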

2. Exploring the data and building our target#

The merged table has the features and the labels, but our target doesn’t exist as a clean binary column yet, so we have to build it. Look at the outcome column first:
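One way to take that look is value_counts. The sketch below runs on a made-up column; the name "Outcome Type" and the category labels are assumptions about how the outcome is stored.

```python
import pandas as pd

# Made-up outcome column, including one missing value.
df = pd.DataFrame({
    "Outcome Type": ["Adoption", "Transfer", "Adoption", "Euthanasia", None, "Return to Owner"],
})

# dropna=False keeps missing outcomes visible as their own row in the counts.
counts = df["Outcome Type"].value_counts(dropna=False)
print(counts)
```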

Building the binary target#

We’ll call an outcome “bad” if the animal was euthanized, died, disposed of, reported missing, or lost. Everything else (adopted, transferred to a rescue, returned to owner, relocated) we’ll call “good”.

Like other class labels we’ve seen, this can be subjective! “Transferred” could mean a no-kill rescue partner (good) or another crowded facility (less good).
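The collapse into a binary column can be sketched with isin. The exact label strings in BAD_OUTCOMES and the column names are assumptions; they would need to match whatever the outcome column actually contains.

```python
import pandas as pd

# Assumed spellings of the "bad" categories described above.
BAD_OUTCOMES = {"Euthanasia", "Died", "Disposal", "Missing", "Lost"}

df = pd.DataFrame({
    "Outcome Type": ["Adoption", "Euthanasia", "Transfer", "Died", "Return to Owner", "Missing"],
})

# 1 = bad outcome, 0 = everything else.
df["bad_outcome"] = df["Outcome Type"].isin(BAD_OUTCOMES).astype(int)
print(df)
print("share of bad outcomes:", df["bad_outcome"].mean())
```

Note that isin returns False for a missing value, so a row with no recorded outcome would silently be labeled "good" by this rule.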

Tip

Pressing shift-tab in the notebook will (sometimes) show the docstring for the object under your cursor.

We then need to check for missing outcome values:
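A minimal sketch of that check, on made-up rows. Dropping the unlabeled rows is one reasonable choice assumed here, since a row without an outcome cannot be used for supervised training; "Outcome Type" is again an assumed column name.

```python
import pandas as pd

df = pd.DataFrame({
    "Animal ID": ["A001", "A002", "A003", "A004"],
    "Outcome Type": ["Adoption", None, "Euthanasia", "Transfer"],
})

# Count rows with no recorded outcome.
n_missing = df["Outcome Type"].isna().sum()
print("missing outcomes:", n_missing)

# Keep only rows that have a label.
df = df.dropna(subset=["Outcome Type"]).reset_index(drop=True)
print(df)
```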

Class imbalance takeaway

Around 8% of animals have a bad outcome, so the classes are imbalanced. A model that always predicts “good” would already be about 92% accurate, so accuracy is not a useful metric here; we will evaluate our models with ROC-AUC instead.
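To make the imbalance point concrete, here is a small sketch with synthetic labels at an 8% positive rate (not the shelter data). A "model" that predicts good for everyone scores well on accuracy but no better than chance on ROC-AUC.

```python
import numpy as np
from sklearn.metrics import accuracy_score, roc_auc_score

# Synthetic labels: 8 bad outcomes (1) out of 100 animals.
y_true = np.array([1] * 8 + [0] * 92)

# A useless baseline that predicts "good" (0) for every animal.
always_good = np.zeros_like(y_true)

acc = accuracy_score(y_true, always_good)
auc = roc_auc_score(y_true, always_good.astype(float))
print(f"accuracy = {acc:.2f}, ROC-AUC = {auc:.2f}")
```

The baseline never identifies a single at-risk animal, yet its accuracy is 0.92; its ROC-AUC of 0.5 reflects that it cannot rank animals at all.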

Missingness in data#

In practice, most real datasets have gaps. Pandas represents missing values as NaN. Two quick helpers for spotting it:

df.isna() returns a boolean DataFrame the same shape as df, with True wherever a value is missing

df.isna().sum() gives you a per-column count of missing values (since True sums as 1)

When we build X, we also pass dummy_na=True to pd.get_dummies so that missing categoricals get explicit _nan columns, rather than showing up only as all zeros across the level dummies.

    # Toy table with one missing age and one missing breed.
    toy_df = pd.DataFrame({
        "name": ["Bella", "Speedy", "King"],
        "age": [3, None, 7],
        "breed": ["Lab", "Tabby", None],
    })
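Applying the helpers to this toy table might look like the sketch below. Filling age with the median is only an illustrative choice of fill value, not something the lecture prescribes.

```python
import pandas as pd

toy_df = pd.DataFrame({
    "name": ["Bella", "Speedy", "King"],
    "age": [3, None, 7],
    "breed": ["Lab", "Tabby", None],
})

# Boolean mask of missing cells, then a per-column count.
print(toy_df.isna())
missing_per_column = toy_df.isna().sum()
print(missing_per_column)

# Fill the numeric gap (median chosen here purely for illustration).
filled = toy_df.fillna({"age": toy_df["age"].median()})

# One-hot encode breed with an explicit indicator column for the missing value.
dummies = pd.get_dummies(toy_df["breed"], prefix="breed", dummy_na=True)
print(dummies)
```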

Missingness in data

Most columns are complete, but Intake Condition has about 1,200 missing values (~6% of rows). Here are a few reasonable choices:

Drop the rows: okay if the missingness is random or a small fraction of the data

Drop the column: overkill, because the non-missing rows still carry real signal

Encode missing in the dummy expansion: adds explicit _nan indicator columns for categoricals with missing entries, without picking a fake string label first

Missingness itself can be an informative feature: for example, a staff member in a rush might skip the field more often for healthy-looking animals.

One last look before we build features: is Intake Condition actually predictive of outcome?
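A quick way to answer that is to compare the bad-outcome rate across conditions, keeping the missing group visible. The rows below are made up, and bad_outcome is a hypothetical name for the binary target column.

```python
import pandas as pd

# Made-up rows; bad_outcome is the binary target (1 = bad).
df = pd.DataFrame({
    "Intake Condition": ["Normal", "Normal", "Injured", "Injured", None, None, "Sick", "Normal"],
    "bad_outcome":      [0, 0, 1, 0, 0, 1, 1, 0],
})

# dropna=False keeps the rows with a missing condition as their own group.
rate_by_condition = (
    df.groupby("Intake Condition", dropna=False)["bad_outcome"]
      .agg(["mean", "count"])
      .sort_values("mean", ascending=False)
)
print(rate_by_condition)
```

Reporting the count next to the mean helps avoid over-reading a rate that comes from only a handful of animals.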

3. Features and train/test split#

Now we build the feature matrix X and label vector y.

Important: we can only use information available at intake time.

We’ll drop columns Breed and Color because they have too many unique values to use get_dummies on.

Parsing age#

Age upon Intake is a string like "2 years" or "3 weeks", but ML models want numbers.

We’ll write a small helper that parses any of these formats into days, then use .apply() to run it over the whole column:
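A sketch of such a helper follows. The function name, the conversion factors (30 days per month, 365 per year), and the choice to return NaN for anything unparseable are all assumptions, not necessarily what the original notebook does.

```python
import numpy as np
import pandas as pd

# Rough conversions; months and years are approximations.
DAYS_PER_UNIT = {"day": 1, "week": 7, "month": 30, "year": 365}

def parse_age_to_days(age_text):
    """Convert strings like '2 years' or '3 weeks' to days (NaN if unparseable)."""
    if not isinstance(age_text, str):
        return np.nan
    parts = age_text.strip().lower().split()
    if len(parts) != 2:
        return np.nan
    number, unit = parts
    unit = unit.rstrip("s")  # "years" -> "year", "weeks" -> "week"
    try:
        return float(number) * DAYS_PER_UNIT[unit]
    except (ValueError, KeyError):
        return np.nan

ages = pd.Series(["2 years", "3 weeks", "1 month", "5 days", None, "unknown"])
age_days = ages.apply(parse_age_to_days)
print(age_days)
```

Returning NaN instead of raising keeps .apply() from failing on a single malformed entry; the resulting gaps can then be handled like any other missing value.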

One-hot encoding the categorical columns#

Let’s look at the four categorical columns we’ll use:

Animal Type

Sex upon Intake

Intake Type

Intake Condition

Four categorical columns (Animal Type, Sex upon Intake, Intake Type, Intake Condition) all have a small number of observed levels, so pd.get_dummies can be used to generate features. We also set dummy_na=True so any NaN in those columns becomes an explicit missing-indicator column (for example Intake Condition_nan).
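The encoding step can be sketched on a three-row stand-in table. The cell values and the age_days column name are illustrative assumptions; the four categorical column names are the ones listed above.

```python
import pandas as pd

CATEGORICAL_COLS = ["Animal Type", "Sex upon Intake", "Intake Type", "Intake Condition"]

# Illustrative rows only; the second animal has no recorded intake condition.
df = pd.DataFrame({
    "Animal Type": ["Dog", "Cat", "Dog"],
    "Sex upon Intake": ["Intact Male", "Spayed Female", "Neutered Male"],
    "Intake Type": ["Stray", "Owner Surrender", "Stray"],
    "Intake Condition": ["Normal", None, "Injured"],
    "age_days": [730.0, 21.0, 30.0],
})

# Numeric columns pass through; listed columns are expanded into dummies.
X = pd.get_dummies(
    df[CATEGORICAL_COLS + ["age_days"]],
    columns=CATEGORICAL_COLS,
    dummy_na=True,
)
print(X.columns.tolist())
```

With dummy_na=True, pandas adds a _nan column for every encoded column, including those with no missing values, where the column is simply all zeros.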

4. Model, train, evaluate#

A first baseline: how far does one feature take us?#

Before we throw all our features at a model, let’s see how well a single feature does.

We’ll fit a logistic regression on age_days alone and measure ROC-AUC on the test set.
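The shape of that baseline is sketched below on synthetic data, so the AUC it prints says nothing about the shelter data. The label-generating rule, the 80/20 split, and stratify=y are assumptions made for the example.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(42)
n = 2000

# Synthetic stand-in for age_days and for the binary target.
age_days = rng.integers(1, 5000, size=n).astype(float)
p_bad = 0.03 + 0.10 * (age_days / 5000)  # older animals: somewhat higher risk
y = (rng.random(n) < p_bad).astype(int)
X = age_days.reshape(-1, 1)  # scikit-learn expects a 2D feature matrix

# stratify=y keeps the rare class at a similar rate in train and test.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

model = LogisticRegression(max_iter=1000, random_state=42)
model.fit(X_train, y_train)

# ROC-AUC needs scores (probabilities of the positive class), not hard labels.
scores = model.predict_proba(X_test)[:, 1]
auc = roc_auc_score(y_test, scores)
print(f"one-feature ROC-AUC: {auc:.3f}")
```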

Adding all the intake features#

We’ll rerun the same logistic regression, but now give it every feature we built in section 3.

Another model: Random Forest#

Comparing all three models with ROC curves#

Let’s put them on one ROC plot to see the rankings side by side.
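A sketch of the comparison on synthetic data from make_classification (not the shelter data), computing one ROC curve per model. Using column 0 as the "one feature" and the specific hyperparameters are assumptions; the printed AUCs describe only this synthetic problem.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score, roc_curve
from sklearn.model_selection import train_test_split

# Synthetic imbalanced problem: roughly 8% positives.
X, y = make_classification(
    n_samples=2000, n_features=10, n_informative=5,
    weights=[0.92, 0.08], random_state=42,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

all_cols = list(range(X.shape[1]))
# name -> (model, feature columns the model is allowed to see)
candidates = {
    "LR, one feature": (LogisticRegression(max_iter=1000, random_state=42), [0]),
    "LR, all features": (LogisticRegression(max_iter=1000, random_state=42), all_cols),
    "Random Forest": (RandomForestClassifier(n_estimators=200, random_state=42), all_cols),
}

curves = {}
for name, (model, cols) in candidates.items():
    model.fit(X_train[:, cols], y_train)
    scores = model.predict_proba(X_test[:, cols])[:, 1]
    fpr, tpr, _ = roc_curve(y_test, scores)
    curves[name] = (fpr, tpr, roc_auc_score(y_test, scores))
    print(f"{name}: AUC = {curves[name][2]:.3f}")
```

Each (fpr, tpr) pair can then be drawn on shared axes with a plotting call such as matplotlib's plt.plot(fpr, tpr, label=name), with the diagonal from (0, 0) to (1, 1) as the chance reference.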

Feature performance takeaway

We should see a big jump from one feature to all features in the logistic regression, from about 0.60 to about 0.90.

We see minimal improvement from the random forest.

Getting features into a usable shape, or collecting more features, sometimes matters more than swapping the ML model type – especially in this case where there are only a few features.

5. Reproducibility check#

An important habit for code hygiene: every time you finish a notebook, restart the kernel and run all cells from top to bottom. This prevents:

Cell-order bugs. If you define a variable in cell 12 and then modify it in cell 5 after scrolling up, the notebook run as a linear script no longer reproduces your results.

Hidden randomness. Forgetting to set random_state somewhere. Restart + run all lets you verify the final metric is stable across runs.

Keep random_state=42 on every step that involves randomness to ensure reproducibility: train_test_split, LogisticRegression, RandomForestClassifier
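One way to turn this habit into a check is to run the whole split-fit-score pipeline twice and assert that the metric is identical. The sketch below uses synthetic data; run_pipeline is a hypothetical helper, not a function from the original notebook.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

def run_pipeline(seed=42):
    """Split, fit, and score with every source of randomness tied to `seed`."""
    # Fixed synthetic dataset standing in for the shelter features.
    X, y = make_classification(n_samples=1000, weights=[0.92, 0.08], random_state=0)
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, random_state=seed, stratify=y
    )
    model = RandomForestClassifier(n_estimators=100, random_state=seed)
    model.fit(X_train, y_train)
    return roc_auc_score(y_test, model.predict_proba(X_test)[:, 1])

first_auc = run_pipeline()
second_auc = run_pipeline()

# If a random_state were missing somewhere, this assertion would likely fail.
assert first_auc == second_auc, "metric changed between identical runs"
print(f"stable ROC-AUC: {first_auc:.3f}")
```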

We’ll use Kernel -> Restart Kernel and Run All Cells to let the notebook rerun top to bottom.

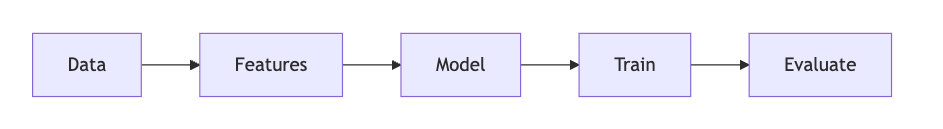

Wrap-up#

Data: two raw CSVs, merged with pd.merge on a shared key.

Features: target engineering (collapsing outcome categories into binary), handling missingness, categorical encoding with pd.get_dummies, custom parsing for the age string.

Model + Train + Evaluate: three models compared on ROC-AUC: a one-feature LR, a full-feature LR, and a Random Forest.

Reproducibility: seeds, restart and run all, assertion on the final metric.